Summary: Users were given an option to share ChatGPT logs via a link. Nothing was put in place to limit access: anyone who had the link could read the log, and search engines can index them. Furthermore, once you had seen a couple of such links, you could easily think to run a site: web search, which let you peruse the whole corpus of such logs.

How could professional programmers have left such a large security flaw?

It is such a predictable “user error” that it’s hard to believe it was an accident of any sort. Lots of small DIY FLOSS sharing services for text, pics, videos, etc. mitigate this with simple measures, which can be discretionary or mandatory for the user:

- unpublish after a set time period

- link can only be accessed x times

- require login with access granted to specific users

- require password, which could even be shared at the same time as the link in this situation

- discourage search engine indexing, bots, etc. (a bit hypocritical for ChatGPT, but it should still be done)
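The measures in the list above are cheap to implement together. Below is a minimal Python sketch, assuming a hypothetical in-memory store (`ShareStore`, `SharedLink`, `create` and `fetch` are invented for illustration, not any real service's API). Each link gets an unguessable random token, an expiry time, an optional view limit and an optional password, and every response carries an `X-Robots-Tag: noindex, nofollow` header to discourage indexing.

```python
import hashlib
import hmac
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SharedLink:
    body: str
    expires_at: float             # Unix timestamp after which the link is dead
    max_views: Optional[int]      # None means unlimited views
    password_hash: Optional[bytes] = None
    salt: bytes = field(default_factory=lambda: secrets.token_bytes(16))
    views: int = 0


def _hash_password(password: str, salt: bytes) -> bytes:
    # Standard-library PBKDF2; the iteration count is an illustrative choice
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 200_000)


class ShareStore:
    """Hypothetical in-memory store for share links (illustration only)."""

    def __init__(self) -> None:
        self._links: dict[str, SharedLink] = {}

    def create(self, body: str, ttl_seconds: float,
               max_views: Optional[int] = None,
               password: Optional[str] = None) -> str:
        # 128 bits of randomness, so tokens cannot be guessed or enumerated
        token = secrets.token_urlsafe(16)
        link = SharedLink(body=body, expires_at=time.time() + ttl_seconds,
                          max_views=max_views)
        if password is not None:
            link.password_hash = _hash_password(password, link.salt)
        self._links[token] = link
        return token

    def fetch(self, token: str, password: Optional[str] = None):
        """Return (status, headers, body) the way a web handler might."""
        # Ask well-behaved crawlers not to index or follow, on every response
        headers = {"X-Robots-Tag": "noindex, nofollow"}
        link = self._links.get(token)
        if link is None:
            return 404, headers, "not found"
        expired = time.time() >= link.expires_at
        used_up = link.max_views is not None and link.views >= link.max_views
        if expired or used_up:
            del self._links[token]  # unpublish: expired or view budget spent
            return 404, headers, "not found"
        if link.password_hash is not None:
            supplied = _hash_password(password or "", link.salt)
            # Constant-time comparison avoids leaking hash bytes via timing
            if not hmac.compare_digest(supplied, link.password_hash):
                return 403, headers, "password required"
        link.views += 1
        return 200, headers, link.body
```

For example, a link created with `max_views=2` and a password returns 403 for a wrong password, 200 for the first two correct fetches, and 404 afterwards. The noindex header is only a request to crawlers; the expiry, view limit and password are what actually restrict access.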

Apparently they did nothing like this.

Am I crazy, or does this convey a complete lack of giving even a single shit about people?

full text (screenshot images probably won't display)

From private chats to full legal identities revealed – internet users are finding ChatGPT conversations that inadvertently ended up on a simple Google search.

If you’ve ever shared a ChatGPT conversation using the “Share” button, there’s a chance it might now be floating around somewhere on Google, just a few keystrokes away from complete strangers.

A growing number of internet sleuths are discovering that ChatGPT’s shared links, which were originally designed for collaboration, are getting indexed by search engines.

ChatGPT’s shared links feature allows users to generate a unique URL for a ChatGPT conversation. The shared chat becomes accessible to anyone with the link. However, if you share the URL on social media or a website, or if someone else shares it, Google’s crawlers can find it. Also, if you tick the box “Make this chat discoverable” while generating a URL, it automatically becomes accessible to Google.

While OpenAI warns users not to include sensitive content in shared links, many didn’t seem to expect their private moments with an AI chatbot to end up being searchable on the internet.

Privacy nightmare: “WAY too many people getting freaky”

A simple Google site: search using the shared-link URL structure brings up thousands of indexed ChatGPT conversations. This is likely not the full count, as it takes time for Google to index conversations. Redditors have been actively sharing bizarre cases of private and even dangerous information that could easily be found with a Google search.

“I found some dude’s conversation about building a resume. It has his full legal name, phone number, email, location, and comprehensive work history,” wrote one Redditor.

“These convos are totally discoverable to anyone with the right search terms.”

“Found what looks like someone trying to encode a message to deliver a sketchy package to someone in the UK,” another Redditor wrote.

Users have stumbled across emotional outpourings, tales of trauma, and people asking ChatGPT about its feelings. Some conversations include email addresses, names of kids, and even home locations, as well as photos of the users and their voice messages.

“I found something similar… from a sex worker/influencer, where they doxxed their full name… I reached out on Twitter and said ‘hey your info is up there.’ They went berserk on me. Made me question my morality. But I did the right thing.”

“I’ve already found… WAY too many people getting freaky,” said another.

Cybernews has reached out to OpenAI for comment but has yet to receive a response.

“Many cases discussed online involve exposure of personally identifiable data such as names and addresses,” commented the Cybernews research team.

“This information could be used to enable harassment or doxxing. If these conversations include controversial content, it could be weaponized for such harassment,” researchers added.

OpenAI rushing to remove the feature

After this article was published, OpenAI’s CISO, Dane Stuckey, posted on X that the company is planning to remove the feature from the ChatGPT app starting tomorrow. “This was a short-lived experiment to help people discover useful conversations,” wrote Stuckey.

“Ultimately, we think this feature introduced too many opportunities for folks to accidentally share things they didn’t intend to, so we’re removing the option. We’re also working to remove indexed content from the relevant search engines.”

Stuckey said that the feature required users to opt in, first by picking a chat to share and then by clicking a checkbox before the chats were shared with search engines.

“Removing the share feature in the ChatGPT app may be an extreme way to deal with the problem. However, it’s great that OpenAI is working on removing shared content from search engine indexes, and hopefully, this will allow for faster removal of unintentionally shared content,” commented the Cybernews research team.

How to make your ChatGPT conversations private

When you create a shared link in ChatGPT, it publishes a static read-only version of the conversation to a public OpenAI-hosted page. This page can be indexed by search engines.

Deleting the chat in your ChatGPT account does not delete the shared URL page. The shared page remains live unless you explicitly delete the shared link. OpenAI explains in its help section that if you created a link that you no longer want to be public, you can delete the link or clear the conversation.

The conversation will no longer be accessible via the shared link, but if a user imported the conversation into their chat history, deleting your link will not remove the conversation from their chat history.

Even if you delete the shared link later via OpenAI, the Google search result may still show the page for a while. Clicking on the link would then result in a 404 error or “page not found” once the shared link is deleted.

If you are unsure whether you have shared any conversations in the past, go to ChatGPT settings, pick Data controls, then Shared Links. From there you can remove individual conversations, or click the three dots to get the option to delete all shared links.

“It is usually possible to mitigate this problem by modifying the web crawler rules for your site, typically the ‘robots.txt’ file. However, it has become increasingly common to ignore these rules, especially among operators of LLMs; maybe that’s why they chose to fix the problem by removing the feature entirely,” said the Cybernews research team.
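The robots.txt mitigation the researchers mention works only for crawlers that choose to honor it. A minimal Python sketch, assuming a hypothetical site that serves shared chats under /share/ (the path and the example.com URLs are invented for illustration), uses the standard library's `urllib.robotparser` to show what a compliant crawler would conclude from such a rule:

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a site that serves shared chats under /share/
ROBOTS_TXT = """\
User-agent: *
Disallow: /share/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse rules from text, no network fetch

for url in ("https://example.com/share/abc123", "https://example.com/about"):
    # can_fetch reports whether the rules permit this user agent to crawl url
    print(url, parser.can_fetch("Googlebot", url))
```

A compliant crawler would skip the /share/ page and still crawl /about. The server enforces none of this: a crawler that never reads robots.txt, or reads it and ignores it, can still fetch every public URL. That is the weakness the quote points to.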

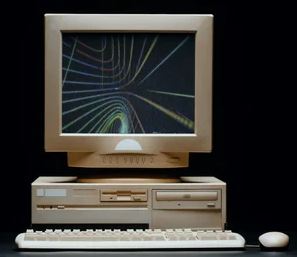

Screenshot by Cybernews.

Article updated on August 1st with the response from OpenAI and a comment from the Cybernews research team.

It has always been my assumption that all online slop generator logs are extremely not private

That’s a sensible assumption online in general. At the same time, many people are having their trust abused heavily. Perhaps they “should” know better, but they don’t, and systems should fail safe so that ignorant or naïve users are not placed at risk.

Fuck everyone involved in this.

Not your platform, not your privacy.

I happened to be looking at the front page of Reddit on whatever day it went from 4 to 5. There were a ton of posts about it, and they were largely from folks who were upset that their buddy was gone, or distraught (not being coy here, actually distraught) that the new one was no longer personable. It was no longer friendly; it was cold and impersonal. Genuinely upset humans. I can’t remember the individual comments, but I remember just feeling bad for a lot of these folks. They are so alienated, and so desperate for friendship, or validation, or to be seen, that I don’t want to be snarky; I just feel bad instead. There’s some theory that explains this, I’m sure, but I’m just lumpen enough not to know what it is.

I’m not sure what percentage of usage this is, but it’s significant enough that articles are written about it and it is talked about in places beyond reddit dot com.

I use copilot because I’m too lazy to use google and I would almost pay money for it to present itself in a cold and impersonal manner. Its attempts to be “personable” and “affirming” are gross, off-putting, and immensely condescending.

Yeah, give me the Star Trek computer’s response format. Terse and to the point, no fluff, no pretending it’s more than it is.

And in Majel Barrett’s voice, right?

The only times these things have ever made me mad were when they were trying to be “personable” while fucking up. I don’t want a fake apology from the text generator, I want the text generator to just generate the text I asked for.

You: Find a quote from this article that most accurately encapsulates its thesis.

Slop machine: Sure thing! Here’s a quote from the article that is the most representative of its central argument: “Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat.”

You: That’s not a quote from the article, that’s just generic placeholder text.

Slop machine: My apologies, you are indeed correct in pointing that out. The quote is indeed not present in the original article. Here is an actual quote from the article that is most representative of its central argument: “Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat.”

Have actually had that exact thing happen, though it was with a novel, not an article, and it didn’t give placeholder text, just quotes that weren’t actually from the novel. I’ve also asked it to provide a specific number of words, and it just didn’t give me the amount I requested. It wasn’t even close, and I was asking for 1000 words, not even a full essay or anything. It kept telling me over and over that it had provided the correct amount, while I could very easily run a word count and see it was off by about 250 words each time. If it had just told me “I cannot generate responses longer than approximately 750 words,” it would’ve been fine; I could’ve worked around that. Instead, it kept telling me that 750 words was actually 1000 words. Maybe that’s why so many people worship at the altar of this new machine god: it is very, very confidently incorrect, and people mistake confidence for accuracy.

No, I’m with you there. It’s just depressing seeing people driven to that level already. I thought we’d need at least a few more years of living in AI hell dystopia before we developed a subpopulation with LLM personalities as their only relationship.

I feel like once you get to that point, it’s really hard to bring someone back into IRL relationships or even just having normal conversations with others, even online. If you’ve grown attached to a thing that only validates you and pretends to like what you like, how can you be convinced to try experiencing real interactions with potential conflict and social awkwardness again? And every tech company in the world is funneling untold billions into making as many of us into these kinds of hermits as possible and preying on us for the rest of our lives. God, I hope this is a bubble that bursts so fucking hard because any long-term success for these AI “services” is incredibly grim.

Like, actually shocked? Or like ironic “Oh no way, the company that scrapes the internet for data for their autocorrect bot might not give a shit about personal security?”

deleted by creator

it’s still a slop machine

but a slop machine with chinese characteristics

Slop

Slop, China

对 (“correct”)

deleted by creator

It is open source, so they get a pass

feed the machine its own diarrhea

Digital Kessler Syndrome

i have a bridge to sell to anyone shocked by this

Yeah, I mean, this is what it looks like when 75% functionality is good enough for prod: you just push security risks like this, start the next moneymaking endeavor, and only address it if the lawsuit will cost more than the engineering to fix it. Modern software companies are designed to work this way, with total disregard for the user. The check is already cashed!

What lawsuit? What law?

there’s a copyright class action lawsuit against AI companies brewing

I just meant SW companies will only prioritize fixes and stability if their clients or users threaten to sue for breach of contract, information mismanagement, or some other damages.

Same idea with Boeing silently installing software to paper over the fact that their engines didn’t fit their planes, killing 300+ people: a calculated risk on their part that backfired, but still not enough to keep the 737 MAX grounded for good, put them out of business, or put anyone in jail (maybe it’s this lack of consequences you’re referring to with your reply).

I am agreeing with you so aggressively that when you say

only address it if the lawsuit will cost more

I think you are correct, but I doubt the lawsuit could or will ever come.

only address it if the lawsuit will cost more than the engineering to fix it. Modern software companies are designed to work this way

Long running theme in the entire economy at this point

What an unmitigated disaster. Just everything around these things is being done in an unacceptably shoddy manner. Massive abuse of public trust all round.

I’ve been struggling to think of an analogy for how incompetent this is.

The best I can think of is it’s like if a full adult clone of Typhoid Mary was placed in every commercial kitchen for a few weeks.

It’s just such a complete disregard of best practice. Mitigations for this are like built into many software libraries.

You only get here by actively avoiding any study of the state of the art, security review, consent training, and, like, any sense of professional ethics?

They use “move fast, break things” startup lingo to disguise that they’re moving fast so nobody notices their thing is broken.

oh its me! a shocked internet user! I can’t believe this!!!

How could professional programmers have left such a large security flaw?

Because it was made by “vibe coders”, not professional programmers

Deep in the screenshots: new innovations in internalized interphobia.

We really are so cooked that people are just like “yeah, fuck it, sure” to the unending comparisons of our bodies to science fiction concepts.